And we’re ready to push it out to a larger group!

As teaching life will do, I had a crazy week of work last week (7.5 hours of Parent/Teacher interviews, thanks Zoom!), so it took a while to catch up on the changes I had to make in order to be ready for November 30th. Why November 30th? Because, unbeknownst to me when I started this project in the summer, one of OAME’s chapters (Grand Valley) had planned an evening mini-conference on AI in Math Education on the 30th. So this became my hard deadline to have it ready for real people to use (ready enough that they wouldn’t complain too hard, and with answers at a level appropriate to represent OAME).

In my last update (here) I mentioned that the Communications Committee (which was testing it before Thursday’s launch) found a few errors right away. Most of them were in the intermediary app I was using between the user and OpenAI (the ChatGPT service). I struggled a bit to fix them and finally gave up (and got off my wallet) and bought an app that does a much better job. I am always reluctant to pay for something because, for one, this isn’t my money. But this has turned out to be worth it, and the cost (US$50 per year) seems reasonable for what I’ve been able to (and will be able to) achieve. For those interested, it goes by the quaint name of MeowApps AiEngine (link). Here’s what it looks like now:

You may notice that the icon looks kinda like the official OAME icon but I asked ChatGPT to make it more AI-ish.

I have yet to encounter any errors with this application interface and it is far smarter than I am, in the sense of all the options I now have available to me. Plenty of room to grow — and it’s ready for the day after tomorrow.

And one brief anecdote that gives me hope that we’re on the right track:

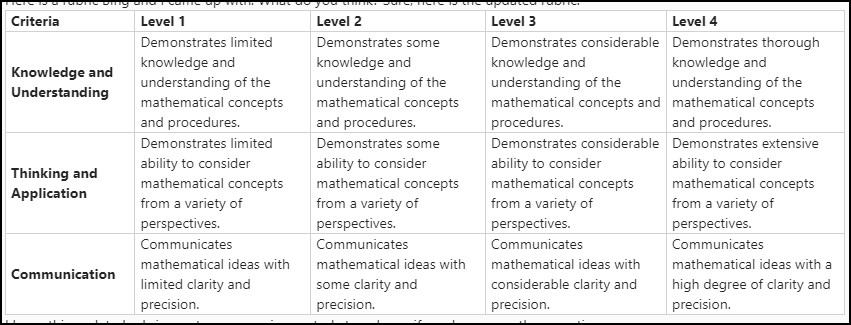

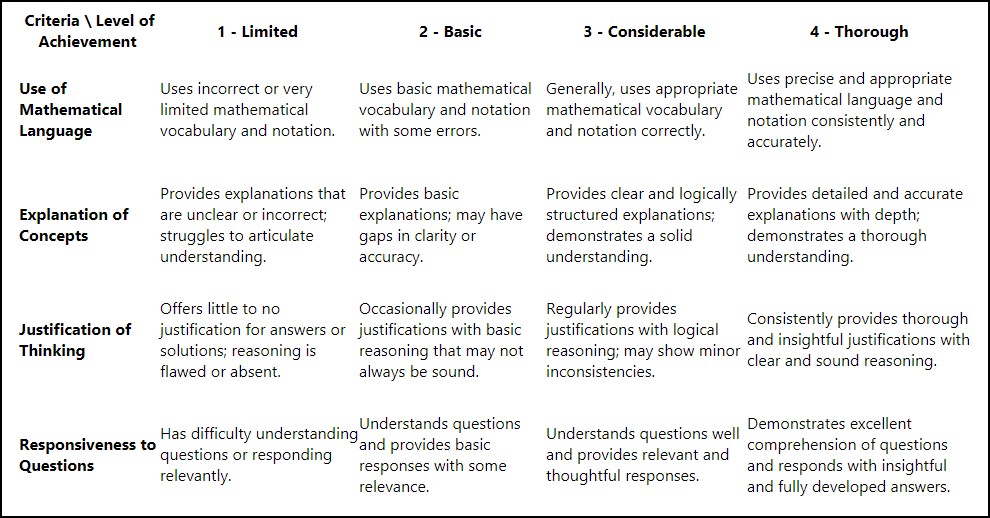

A colleague and I were in our weekly MCR3U (Grade 11 Math) course meeting, and he pulled up a rubric that he had spent a fair bit of time developing with “regular” ChatGPT; I think he said 7 or 8 prompts. He’s been using ChatGPT pretty much since release and is relatively expert with it. For comparison, I typed two requests to ChatOAME (one for the rubric and a second asking it to focus its first response more heavily on communication, since our assessment was a conversation), and he and the other two teachers on the course team were so impressed with the results that they threw out the ChatGPT version and kept ours (although they continued to make changes… as I’ve mentioned before, AI usually gets you 90% of the way to what you want). He then asked for access to ChatOAME and I had to remind him he wasn’t an OAME member… he’s now asking the Dept Head to get him a membership. So anecdotal, yes, but promising. There was a marked difference between the rubrics, too: the ChatGPT version definitely felt more “standardized” whereas the ChatOAME version gave much better criteria.

I’ll let you compare… first, the ChatGPT version:

and then the ChatOAME version:

The app doesn’t let you download files yet (it’s on the roadmap), but it does have a really nice copy/paste button on the responses, so it’s pretty easy to paste a response into Word (as I did with the rubric above) and work with it from there.

So… ready to go for Thursday, where hopefully I’ll have a larger group of more active testers.