So I started working in AI and chatbots about 7 years ago and had a fair bit of success in building a service that provided answers to school-based questions (“When and where is the School Play being performed?”, “What is the homework policy for grade 9 students?”, etc.) and how to use Microsoft “smart” tools (at that time) in my mathematics classes. I presented on my work at the NCTM and OAME conferences in 2018. Unfortunately, a new Chief Technology Officer came on board and cancelled all my projects (cough, and me) so I haven’t played much until ChatGPT came on the scene.

During an online meeting with Microsoft in the Spring, we were talking about the abilities that Microsoft was developing for schools and it struck me that teachers need to take steps to ensure that good, meaningful education is embedded into AI. Not that Microsoft’s work wasn’t good, but they tend to focus on the tool and not the content. When you look at mathematics content on the web, there’s a lot of stuff that teachers likely only use out of convenience: TeachersPayTeachers exists for a reason. Once publishers start pushing out their AI tools (for free, or bundled with their other materials), those tools are going to be focused on American content, standardized testing, rote practice, etc. And ChatGPT (like many other Large Language Models) is based on a wide and diverse collection of content from the web, including Reddit and other social media platforms, that may not reflect quality mathematics education. Even if their answers don’t explicitly include unhelpful responses, the mathematics of what is happening behind the scenes is tilting towards some comment about how students just need to do 100 factoring questions.

So, in response to that thought, I chatted with the OAME (Ontario Association for Mathematics Education, of which I am a board member) about starting a special project to create an Ontario Math Lens to AI — whenever a teacher (or student?) asks a question, they will get a response that is framed by Ontario approaches to curricula, assessment, educational philosophy, indigenous aspects, social-emotional beliefs, and resting on a solid foundation of research and the Mission and Vision of the OAME. As well, the OAME has a 50-year-deep library of their journals (The Gazette and the elementary-focused Abacus) that are a rich reserve of mathematical content and activities.

OAME Guiding Principles

Mission Statement

The mission of OAME/AOEM is to promote, support, and advocate for excellence in mathematics education throughout the Province of Ontario.

A Vision for Learning Mathematics

OAME/AOEM envisions an equitable mathematics education community in which everyone experiences high quality, research-informed, and engaging activities that promote critical thinking while fostering a positive attitude towards mathematics.

Strategic Priorities

OAME/AOEM is committed to the following Strategic Priorities:

Promoting and advocating for equity in mathematics education

Supporting all OAME members by providing value for membership, including quality professional learning, resources, and opportunities to network

Advocating for high quality, research-informed practices while building a community to support mathematics education.

The OAME was on board after I outlined the project and its intent, so I spent the summer doing research and prepping my proposal; fortunately, the OAME has a Special Project Fund that supports these kinds of things. I submitted it and, on October 18th, it was approved!

My first attempt was with a chatbot service, MyAskAI (https://myaskai.com/). I can’t praise these folks enough. Their interface is dead simple, they have an excellent and responsive support system, and they even offer onboarding calls with the staff so that you can walk through your idea and get feedback. It was very helpful for ensuring that we were approaching it correctly, had our documents prepped accordingly, and could keep our costs in line (that last one is important, as future posts will discuss).

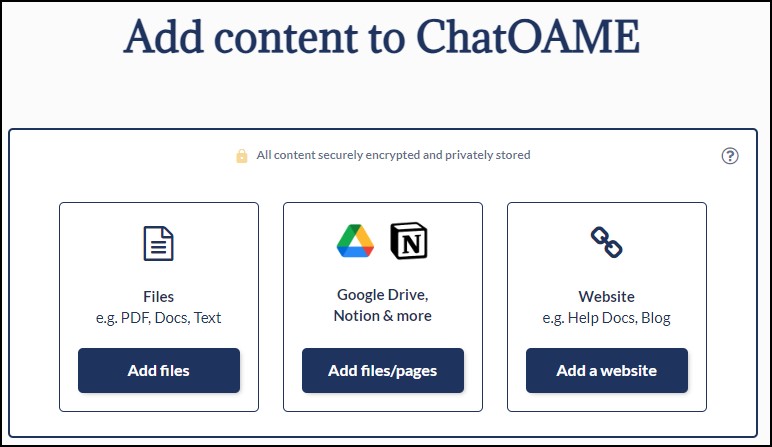

The first production step was to track down content. Fortunately, all of the OAME Gazette and Abacus issues are already in PDF form (more on that later, too) and the OAME website contains much of the other content. Some of it is behind a membership paywall, so it had to be pulled out as text or PDF. As you’ll notice, you can connect a Google Drive to the chat service, so all the Abacus and Gazette PDFs were just put in a folder and included.

For the Ministry content, anything that could be downloaded as PDF was; beyond that, MyAskAI allows you to parse a website. I did that for the Ministry Elementary, Secondary and Assessment sites and for the OAME public-facing pages.

Then I tried to dig a little deeper and pulled foundational texts from Dewey, Piaget, Vygotsky, Papert, etc. And that raised the question: what rubric do we use to decide what goes in as part of the OAME lens? Those listed seemed incontestable, but I know that won’t be true in general. What about Pedagogy of the Oppressed? In general, though, I was looking for content that was referenced by, or supported by, content in Growing Success (Ontario’s guide to assessment) and OAME content. In total, I put in about 850 pieces of information. How we’ll work out our rubric is one of the many pieces still in development. The good thing is that, unlike with our own memories, we can pull content out of the AI and it no longer considers it.

I began to play with the environment. There is a System Message that tells the chatbot what it is, how it’s meant to work, and in what manner it is meant to respond (this is before you put any kind of prompt in; think of it as the guiding principles of the chatbot that you as the user can try to adjust, but this is how the chatbot believes it should respond). The System Message for OAME talks about teaching mathematics well: that answers should be based in research, respond to Ontario curricula and indigenous approaches, and be correct and verifiable with references. The chatbot can still misbehave, but crafting this message is important and it continues to develop as we test the model.
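To make the idea of a System Message concrete, here is a minimal sketch of how one sits ahead of whatever the user types. The wording of the message is my paraphrase of the description above, not the actual OAME text, and the `build_messages` helper is hypothetical. The role/content layout follows a common chat-API convention; whether MyAskAI works this way internally is an assumption.

```python
# Hypothetical wording, paraphrased from the description above;
# this is NOT the real OAME System Message.
SYSTEM_MESSAGE = (
    "You are an assistant for Ontario mathematics teachers. "
    "Base your answers on research, the Ontario curricula and "
    "indigenous approaches, and make sure every answer is correct "
    "and verifiable with references."
)


def build_messages(user_prompt, history=None):
    """Return the message list a chat-style model would receive.

    The system message always comes first, so it frames every reply
    before the user has typed anything.
    """
    messages = [{"role": "system", "content": SYSTEM_MESSAGE}]
    messages.extend(history or [])  # earlier turns, if any
    messages.append({"role": "user", "content": user_prompt})
    return messages


messages = build_messages("What would be a way to improve the assessment of my students?")
for message in messages:
    print(message["role"], "->", message["content"][:50])
```

The user can push against the system message in their own prompt, but it is always the first thing the model reads, which is why the wording matters so much.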

Then I began to test out responses (okay, I’d been doing that all along as the number of pieces I had loaded in grew) and it was great for theoretical questions like “What would be a way to improve the assessment of my students?” and it could respond to some questions like “I need a good activity for quadratic trinomial factoring” … but it fell short on a lot of the practical questions that active teachers are going to ask, such as “I need a question on linear systems involving a basketball team”. Because MyAskAI could only create content based on the documents I had put in, it couldn’t get creative, and its very use-case is to prevent creativity. It doesn’t want to hallucinate, so it responds that it can’t answer that particular question. If there was an activity already in the Gazette or Abacus, or a reference to a lesson in the curricula, etc., then it responded well. But if no one in those 850 pieces of information had talked about a box-and-whisker plot in MTH1W, we were out of luck.
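The behaviour described above can be illustrated with a toy sketch of a strictly document-grounded responder. This is not how MyAskAI is implemented (real services use far more sophisticated matching); the two documents, the stopword list, the keyword-overlap scoring and the `min_overlap` threshold are all invented for illustration.

```python
import re

# Toy stand-ins for the ~850 loaded pieces; titles and text are invented.
DOCUMENTS = {
    "gazette_factoring": "An activity for factoring quadratic trinomials using algebra tiles.",
    "growing_success": "Assessment for learning improves student achievement through feedback.",
}

# Common words that should not count as evidence of a match.
STOPWORDS = {"a", "an", "the", "for", "on", "i", "need", "of", "to",
             "in", "my", "good", "question", "involving"}


def keywords(text):
    """Lowercase the text and return its words minus the stopwords."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOPWORDS


def answer(question, min_overlap=2):
    """Answer only from the best-matching document, otherwise refuse."""
    question_words = keywords(question)
    best_title, best_score = None, 0
    for title, text in DOCUMENTS.items():
        score = len(question_words & keywords(text))
        if score > best_score:
            best_title, best_score = title, score
    if best_score < min_overlap:
        # Nothing in the library supports an answer, so refuse
        # rather than invent one.
        return "Sorry, I can't answer that from the documents I have."
    return f"Based on '{best_title}': {DOCUMENTS[best_title]}"


print(answer("I need a good activity for quadratic trinomial factoring"))
print(answer("I need a question on linear systems involving a basketball team"))
```

The factoring question finds a supporting document and gets an answer; the basketball question matches nothing and is refused. That refusal is the safe behaviour, and it is exactly what makes the tool frustrating for a teacher who wants a fresh question.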

This was anticipated — when I wrote the proposal, I explained how we have to balance accuracy with usability, and while we should start with a strict resource-based tool and see how far we can push it, the experiment would likely fail and we would have to move to a different tool. That’s where we are now — moving to using a tool that keeps the OAME lens but then pushes it out to ChatGPT to allow it to get creative. I’ll talk about that in my next post.

Now, MyAskAI is a great tool — just not for our purpose. However, when I was talking with the support at MyAskAI, they mentioned using it for a conference, and that sounds like an awesome idea for the OAME conference. Since participants are only looking for information on the conference (who, what, when, where, how?) this will be a great tool to use, and I look forward to testing it for that.

Stay tuned for what we are up to now in later posts.