My eyes have gotten tired from rolling as I read the myriad thoughts expressed as ChatGPT arose in the public consciousness. I’d been working with chatbots for the past six or seven years, even presenting at an NCTM conference about the progress I’d made. Unfortunately, my school shut down its EdTech/IT program, so I’ve only been able to observe and fiddle on my own with what was being released. I’ll leave the ongoing discussion of ChatGPT to hoi polloi; this is about something along the path that interests me specifically.

The elementary and high school mathematics that we assign students can be done by technology; it’s been that way for decades. Mathcad, Maple, Mathematica … then PhotoMath put it in their pockets. Our goal, though, is (or should be) not really the answer to the questions we pose; it’s developing students’ ability to problem solve, to read with purpose, to explain logically, to think with clarity and to communicate effectively. (There is an asterisk here, of course: folks involved in the exploration of math and science need the structures we teach to build their fields.) Now, we may not all agree with the government’s chosen curricula (I certainly don’t!) but we make do with what we have. So the doing of math by tech has already been dealt with.

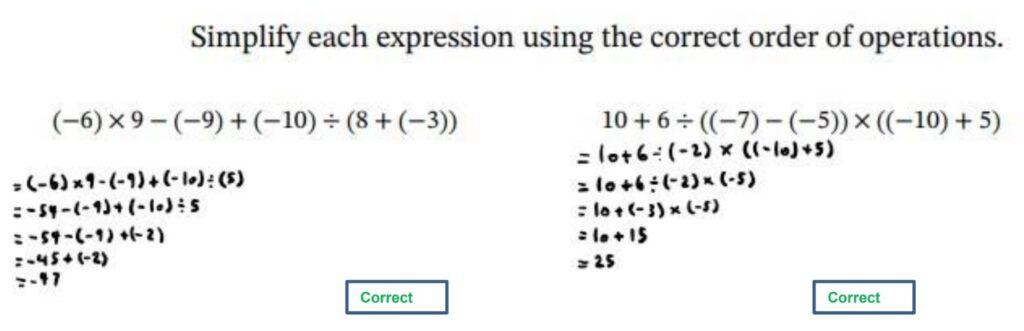

Students get better with feedback, and most of the programs listed above offer only the answer, or even a full solution, without taking the student’s own work into account. Students can compare their work against the solution, but good luck making that happen on a regular basis. So I was pleased to see MathGrader show up. It purports to give feedback on students’ written work.

Two important caveats: (1) it is slow, with about an 8-hour turnaround, and (2) the site has almost no information about the company itself. The terms & conditions and privacy policy are not active and, no matter when I send a problem, there are 1613 problems ahead of me in the queue. It looks dodgy.

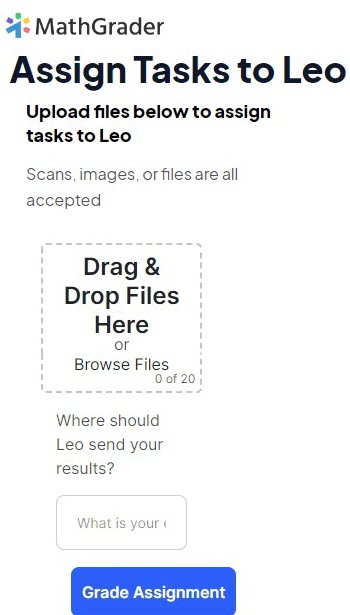

It calls its AI grader “Leo”, and you can upload images of student work to its website. Even that webpage lacks a bit of polish.

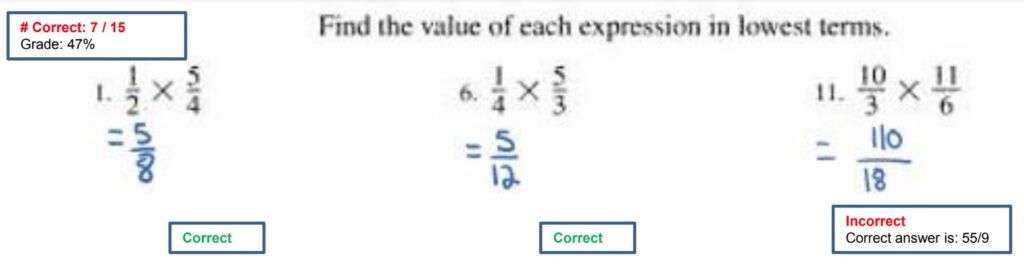

However, it does seem to do an adequate job at determining the correctness or incorrectness of answers that students have written in their OneNote. I wouldn’t say it’s providing much feedback though.

In the example above, all the feedback the student needs is a reminder to reduce the fraction to lowest terms… perhaps even a note that both the numerator and denominator are even. But at the moment, it’s just right or wrong.
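To make that concrete, here is a rough sketch in Python of the kind of hint I have in mind. This is purely my own illustration and not how MathGrader works; the function name `fraction_feedback` and the sample fraction 6/8 are made up for the example.

```python
from math import gcd


def fraction_feedback(numerator: int, denominator: int) -> str:
    """Return a short hint about whether a fraction is in lowest terms.

    Hypothetical helper for illustration only; not part of any real grader.
    """
    if denominator == 0:
        return "The denominator cannot be zero."

    # The greatest common divisor tells us how far the fraction can be reduced.
    common = gcd(numerator, denominator)
    if common == 1:
        return f"{numerator}/{denominator} is already in lowest terms."

    reduced = f"{numerator // common}/{denominator // common}"
    if common % 2 == 0:
        # An even common divisor means both parts are even: an easy thing to spot.
        hint = "Both the numerator and the denominator are even."
    else:
        hint = f"Both the numerator and the denominator are divisible by {common}."
    return f"{hint} Reduce {numerator}/{denominator} to {reduced}."


print(fraction_feedback(6, 8))
# Both the numerator and the denominator are even. Reduce 6/8 to 3/4.
```

The point is only that a nudge like this tells the student what to look at, which a bare right-or-wrong mark does not.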

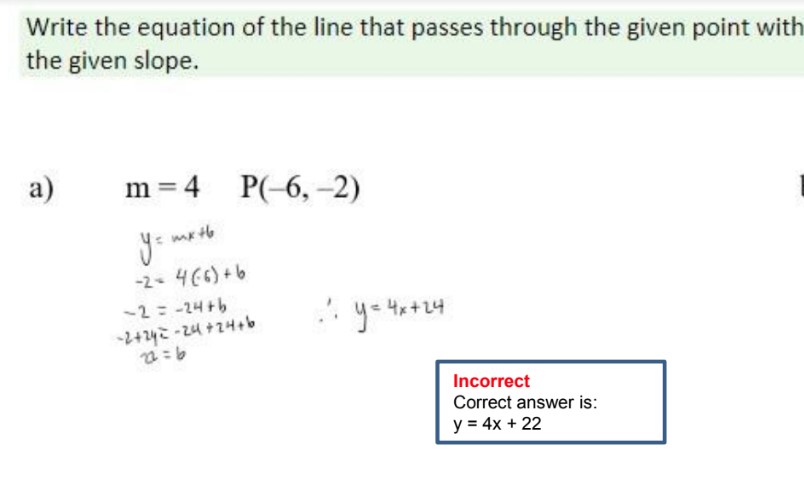

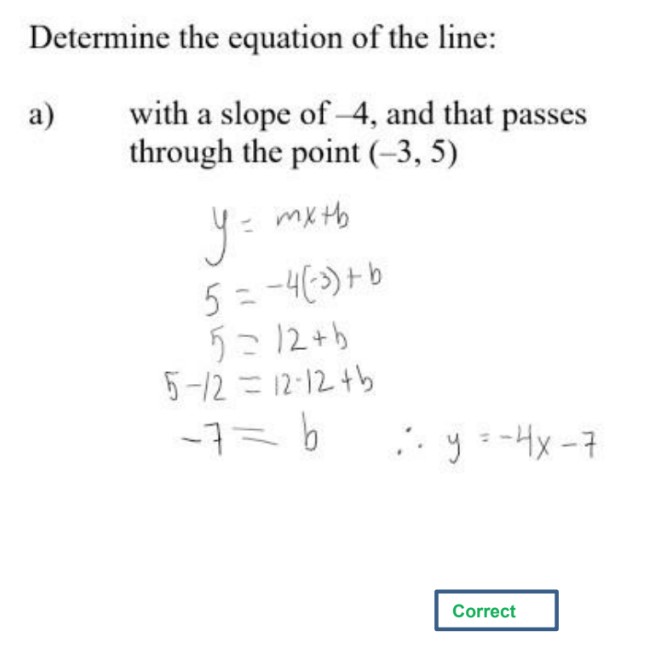

Likewise, in this example, the student correctly found the y-intercept but then copied the answer down wrong! I don’t mean to criticize MathGrader here; this is the first step in what will be a long road.

It only does pre-Calculus work. When I asked it to do Calculus and Vectors questions, it politely replied it couldn’t answer those types of questions yet.

This capability will eventually be built into OneNote: the program can already “read” and understand written mathematics, and Microsoft’s work with its various Coaches points in that direction. But I am glad to see other companies exploring this.

I will admit, given the dodginess of the website, there is a thought that these questions are being marked by a cadre of actual people while the company collects a lot of student work to actually build its engine. Since I’m not including student names, or even using my school email, I’m not too worried about it just yet. And Lord knows I’ve sent enough information to Google, Microsoft, Facebook and Garmin that a little more to another company is a rounding error at this point.

If you’d like to give MathGrader a try, you can use this link:

https://mathgrader.com/ref636wk

It purports (okay, maybe I’m overusing that word) to be a referral link that will accelerate my questions in their queue but, given the challenge with credibility, I have my doubts. Try it out with anonymous student work to see what you get back.

And let’s see where this will lead.

—————

Catching up: I did have a chance to speak to one of the developers. We talked a fair bit about the usability of the product and how it could assist teachers. They’re at the beginning of a long trail and I look forward to following them (and others who are engaged in this development).